The era of “Shadow IT”, where employees secretly used unauthorized SaaS apps, has evolved into something far more volatile. In 2026, we have entered the age of the “Shadow Agent.” Driven by a phenomenon known as “vibe coding,” employees are now bypassing traditional development lifecycles to build, deploy, and run autonomous agents that interact with sensitive corporate data, often without a single line of human-reviewed code.

For business leaders, this represents the ultimate “Autonomy Paradox.” While these agents provide a massive boost to individual productivity, they create a silent, unmapped layer of risk that traditional security tools are fundamentally blind to.

What is Vibe Coding?

Coined by AI researcher Andrej Karpathy, vibe coding refers to a shift where the primary role of a developer moves from writing syntax to “guiding a vibe.” By describing a desired outcome in natural language, users can generate fully functional applications and autonomous agents. As Google Cloud recently detailed in theirtechnical guide to vibe coding, What is vibe coding?1 the process allows even non-technical staff to “forget that the code even exists,” focusing purely on the iterative loop of prompting and refining.

The danger arises when these “vibe-coded” tools are granted access to the enterprise. Unlike a static spreadsheet, a vibe-coded agent is dynamic; it can browse the web, call APIs, and even install its own dependencies. When these agents are deployed without oversight, they become “Shadow Agents,” autonomous entities operating in the dark.

The Security Time Bomb: 45% Failure Rates

The speed of vibe coding often comes at the expense of security due-diligence. Peer-reviewed research published in early 2026 indicates that “self-generative vulnerabilities” are a major concern for industrial applications. A study published in Applied Sciences, Enhancing Security in Industrial Application Development: Case Study on Self-Generating Artificial Intelligence Tools2 demonstrates how AI models like ChatGPT can generate database structures and server-side code (PHP) that lack essential security measures, specifically highlighting risks like SQL injection.

To learn more about how these vulnerabilities are exploited, we have previously examined the mechanics of Agentic Red Teaming.3 In that analysis, we highlighted how AI agents can inadvertently create “ghost permissions,” elevated access rights that stay active long after a task is finished. When a vibe-coded agent is built with a “make it work at all costs” mentality, it often defaults to “Allow All” permissions, effectively opening a back door for any adversary who can compromise the agent’s prompt logic.

The Rise of the Machine Identity Crisis

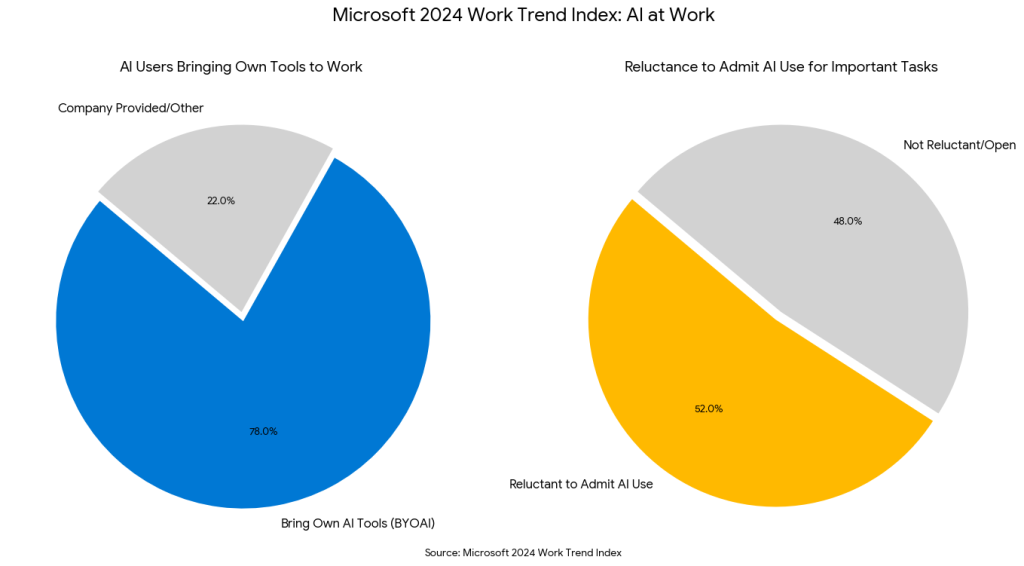

The scale of the Shadow Agent problem is difficult to overstate. According to the Microsoft 2024 Work Trend Index,4 78% of AI users are bringing their own AI tools to work. This “Bring Your Own AI” (BYOAI) trend is driven by employees who feel the need to maintain productivity despite a lack of official company guidance. Furthermore, 52% of employees are reluctant to admit they use AI for their most important tasks due to fears regarding job security and professional reputation.

This creates a massive identity crisis. In a traditional environment, IT manages human identities. In the agentic era, they must manage thousands of machine identities. As previously covered in our research on the Ethics of Automated Remediation,5 the risk isn’t just that the agent might fail, but that it might succeed in doing something it wasn’t supposed to do—like “fixing” a server by deleting a security protocol it perceived as a bottleneck.

Practical Implementation: Bringing the “Vibe” Under Control

Business leaders cannot simply ban vibe coding; the productivity gains are too significant to ignore. Instead, they must move toward a model of “Governed Autonomy.” Here are the actionable steps for IT and security professionals in 2026:

1. Implement Agentic Discovery

You cannot secure what you cannot see. Organizations must deploy real-time discovery tools that look for the specific traffic signatures of agent frameworks (like LangChain or AutoGPT) within the corporate network.

2. Shift-Left for Natural Language

Just as we scan code, we must now scan “vibes.” This means implementing prompt-filtering and “intent-analysis” at the point of creation. As we discussed in our guide to Agentic Red Teaming, testing an agent’s resilience to “indirect prompt injection” (where an agent is manipulated by data it reads) is now a mandatory requirement for any deployed tool.

3. Define Machine-Specific RBAC

Never give an agent a “User” profile. Agents should operate under a “Service-Level” identity with the absolute minimum permissions required for their specific task. If an agent only needs to read a CSV, it should not have the “vibe” to access the entire SQL database.

4. Continuous Remediation Oversight

As previously noted in our work on Automated Remediation Ethics, any agent capable of making changes to the environment must be subject to an “Ethical Guardrail” layer. This layer acts as an automated “Human-on-the-Loop,” checking the agent’s proposed actions against corporate policy before execution.

The 2026 Reckoning

The year 2026 is being described by analysts at Forrester6 as the “Year of Reckoning” for AI. The “vibe” is no longer enough; boards are demanding proof of value and proof of security. Organizations that fail to address the Shadow Agent phenomenon risk what some experts call a “Challenger-level disaster” (a catastrophic system failure caused by a core component that was vibe-coded but never truly understood by its human creators).

Emutare empowers organizations to embrace “vibe coding” safely by bridging the gap between rapid innovation and “Governed Autonomy”. Our AI Adoption and Technology Consultation helps you build secure infrastructure ready for agentic workflows. To counter “Shadow Agents,” we provide Cybersecurity Governance and Risk Assessments to map machine identities and enforce ethical guardrails. With SIEM and XDR Deployment, we ensure real-time visibility into unauthorized agent activity, while our Advisory services align your AI strategy with evolving regulations.

Conclusion

Vibe coding is the ultimate double-edged sword. It democratizes innovation, allowing a marketing manager to build a custom customer-service bot in an afternoon. But it also democratizes risk, allowing that same manager to accidentally expose the entire customer database to the public web.

By integrating the offensive rigor of agentic red teaming with the defensive discipline of ethical remediation governance, enterprises can embrace the “vibe” without falling victim to the shadow. The future of work is autonomous, but it must be an autonomy that is verified, validated, and, above all, visible.

References

- Google. (2025). What Is Vibe Coding?. https://cloud.google.com/discover/what-is-vibe-coding ↩︎

- Mateo Sanguino, T. J. (2024). Enhancing security in industrial application development: Case study on self-generating artificial intelligence tools. Applied Sciences, 14(9), 3780. https://www.mdpi.com/2076-3417/14/9/3780 ↩︎

- Emutare. (2026). Agentic Red Teaming: Using AI to Find Your Own Weaknesses. https://insights.emutare.com/agentic-red-teaming-using-ai-to-find-your-own-weaknesses/ ↩︎

- Microsoft. (2024). 2024 Work Trend Index Annual Report. https://assets-c4akfrf5b4d3f4b7.z01.azurefd.net/assets/2024/05/2024_Work_Trend_Index_Annual_Report_6_7_24_666b2e2fafceb.pdf ↩︎

- Emutare. (2026). The Ethics of Automated Remediation: When to Let the Machine Patch. https://insights.emutare.com/the-ethics-of-automated-remediation-when-to-let-the-machine-patch/ ↩︎

- Forrester. (2025). Predictions 2026: AI Agents, Changing Business Models, And Workplace Culture Impact Enterprise Software. https://www.forrester.com/blogs/predictions-2026-ai-agents-changing-business-models-and-workplace-culture-impact-enterprise-software/ ↩︎

Related Blog Posts

- Cybersecurity Essentials for Startups: Safeguarding Your Business from Digital Threats

- Insider Threats: Detection and Prevention Strategies

- Securing Microsoft 365 Email Environments: A Comprehensive Guide

- Crisis Communication During Security Incidents: A Strategic Approach

- Building a Security Operations Center (SOC): Key Components

- Implementing Single Sign-On: Pros, Cons, and Best Practices

- Backup and Recovery: Building Resilience Against Ransomware